Throughout my years analyzing data and testing marketing superstitions and myths, I’ve come across a consistent truth that is key to understanding any kind of marketing data: the difference between self-reported data and observational data. That is, the difference between what people tell you they do and what they actually do.

When you conduct a survey, your respondents are going to answer in specific ways. They’ll tell you what they think is true, what they want to be true and what they think you want to hear. Often, when you analyze actual behaviors through observational data, you find that what people thought they did was not actually what happened.

A few simple examples illustrate this problem. Imagine asking a room full of people if they think advertising is effective in influencing their purchasing decisions. Most will say no, but we know the opposite to be true. Or ask a room full of people to raise their hand if they think they’re above average at their job. Most hands will go up, but simple math shows us that at most half of any group can be above its median.

Survey data should be used as a way to understand attitudes, rather than behaviors. Read survey results as a narrative about how respondents feel about certain things, not as indicators of their actual actions.

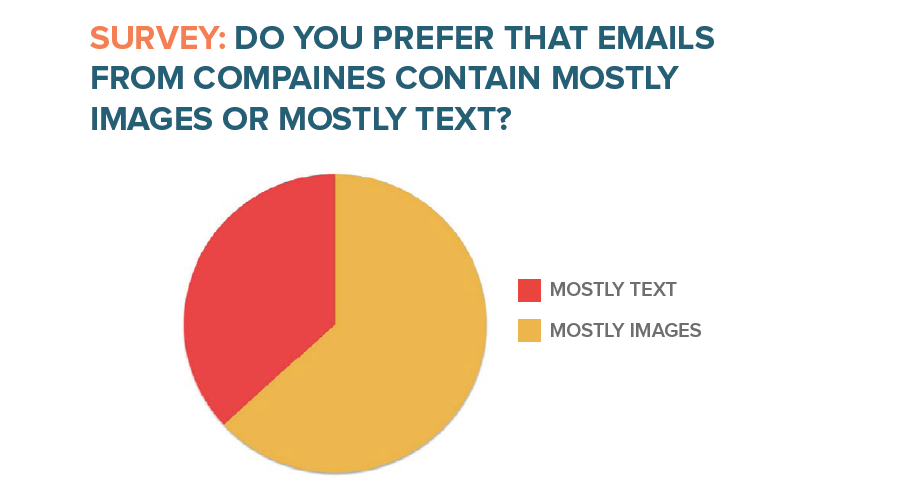

The first few data points in this report form a key example of this. Survey takers report preferring emails full of images, but when we look at hard observational data, we find that they actually respond better to text-heavy emails.

In both the 2011 and 2014 versions of this survey, we asked respondents whether they preferred marketing emails that were mostly images or mostly text. The results from both were very similar, with nearly two-thirds of respondents saying they preferred mostly image-based emails.

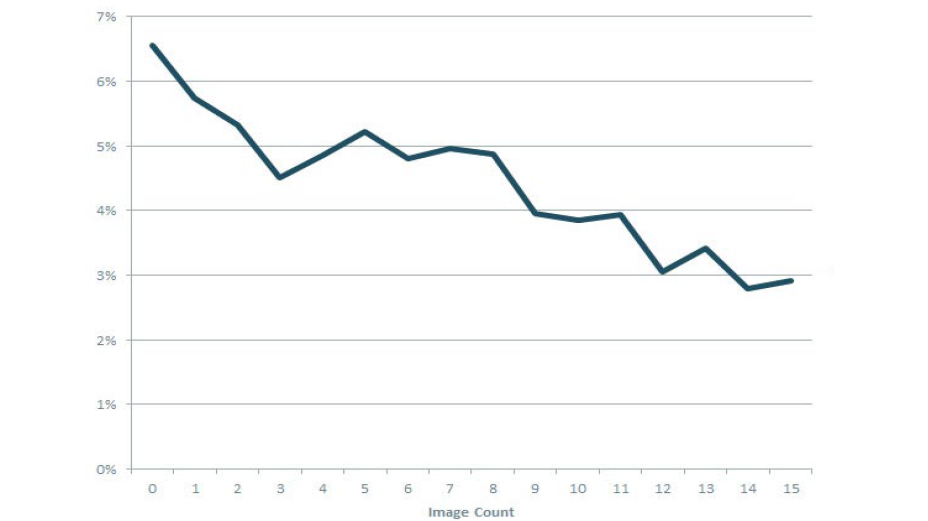

Turning to the observational data found in the dataset of HubSpot customers’ sent emails, we find the opposite to be true. As the number of images in an email increased, the click-through rate (CTR) of the emails tended to decrease. Note that CTR drops by nearly a percent just going from no images to one image.
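For readers who want to run the same kind of check on their own sends, here is a minimal sketch of grouping emails by image count and computing an aggregate CTR for each group. The record keys (`images`, `delivered`, `clicks`) and the sample numbers are assumptions made up for illustration; they are not the HubSpot dataset or its schema.

```python
from collections import defaultdict

def ctr_by_image_count(emails):
    """Return {image_count: aggregate CTR} for a list of email records.

    Each record is a dict with hypothetical keys:
    'images', 'delivered', and 'clicks'.
    """
    delivered = defaultdict(int)
    clicks = defaultdict(int)
    for email in emails:
        delivered[email["images"]] += email["delivered"]
        clicks[email["images"]] += email["clicks"]
    # Skip groups with no deliveries to avoid dividing by zero.
    return {n: clicks[n] / delivered[n] for n in sorted(delivered) if delivered[n] > 0}

# Made-up illustrative records, not figures from the report.
sample = [
    {"images": 0, "delivered": 1000, "clicks": 60},
    {"images": 0, "delivered": 1000, "clicks": 50},
    {"images": 1, "delivered": 2000, "clicks": 90},
    {"images": 3, "delivered": 500, "clicks": 15},
]

rates = ctr_by_image_count(sample)
for n, rate in rates.items():
    print(f"{n} images: CTR {rate:.1%}")
```

Pooling clicks and deliveries per group, rather than averaging each email's own CTR, keeps a handful of tiny sends from dominating the result. That is a design choice in this sketch, not necessarily how the report's charts were built.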

This points out a difficulty in relying solely on self-reported data like surveys. When taking surveys, respondents often answer in ways that reflect either what they think the data collector wants to hear or what they want to think about themselves. An email with mostly images sounds more interesting than an email with mostly text when discussed in hypothetical terms, but the reality is somewhat different.

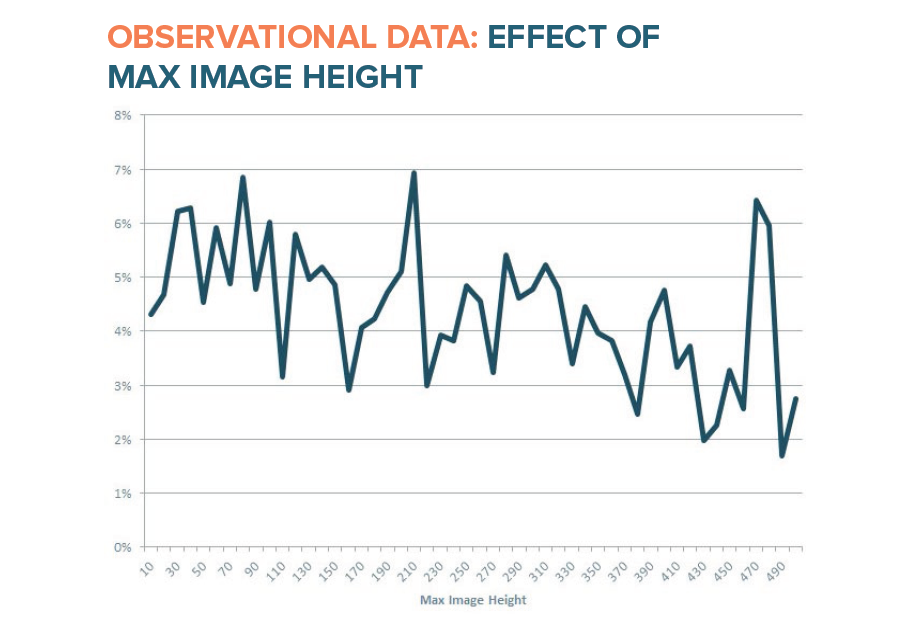

Rather than only looking at the raw number of images in an email, I also looked at the size of the images. First, I looked at the height of the tallest image in each email. I found that as the max image height of emails in our dataset increased, the CTR of the emails decreased.

With line graphs of large datasets like this one, it is important to focus on the overall trend of the line, rather than the individual bumps, which are merely artifacts of real-world data.
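One way to separate the trend from the bumps is to smooth the series and fit a straight line to it. The sketch below uses a simple moving average and a least-squares slope. Both are common techniques I am swapping in for illustration, not necessarily what produced the chart above, and the height buckets and CTR values are made up.

```python
def moving_average(values, window=3):
    """Smooth a series with a centered moving average (use an odd window)."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        # Shrink the window at the edges instead of padding.
        lo, hi = max(0, i - half), min(len(values), i + half + 1)
        smoothed.append(sum(values[lo:hi]) / (hi - lo))
    return smoothed

def trend_slope(xs, ys):
    """Least-squares slope of ys against xs."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var

# Made-up CTR values (percent) by max image height in pixels.
heights = [100, 200, 300, 400, 500, 600]
ctr = [5.2, 5.6, 4.7, 4.9, 4.1, 4.3]

smoothed = moving_average(ctr)
slope = trend_slope(heights, ctr)
print("smoothed CTR:", [round(v, 2) for v in smoothed])
print("slope (CTR points per pixel):", slope)
```

In this toy series, CTR ticks upward at several individual points, yet the fitted slope is negative. That negative slope is the overall trend the paragraph above asks you to read.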

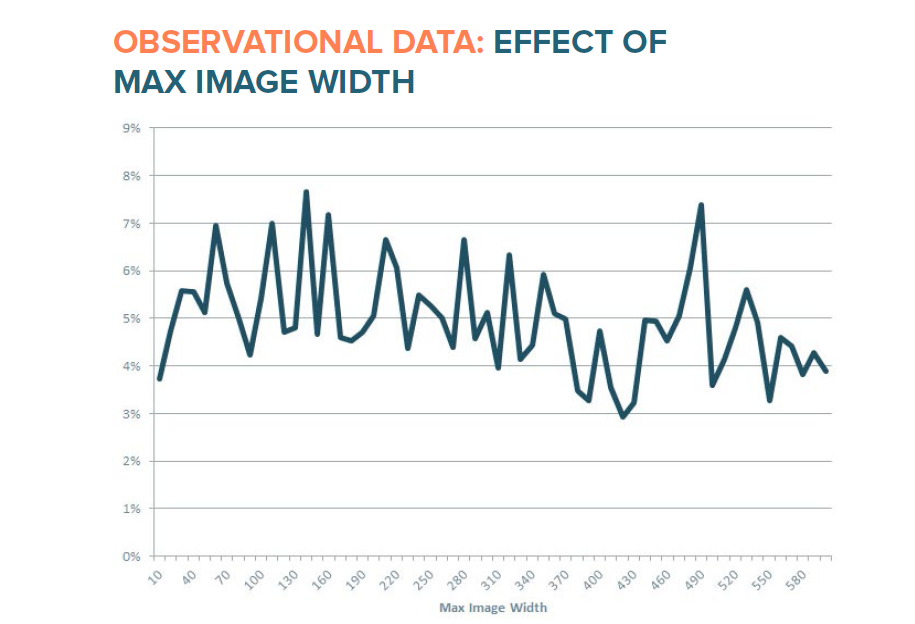

I also looked at max image width – that is, the width of the widest image in the email. The negative correlation between max image width and CTR isn’t as strong as it is between CTR and max height, but there seems to be some preference for smaller images.
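To compare how strongly two measurements track CTR, a correlation coefficient is a reasonable first tool. Here is a minimal sketch using Pearson's r. The report does not say which statistic was used, so treat the choice as an assumption, and note that the per-email values are invented for illustration.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)

# Made-up per-email measurements (pixels) and CTR (percent).
max_height = [100, 200, 300, 400, 500]
max_width = [200, 600, 300, 400, 500]
ctr = [5.0, 4.6, 4.1, 3.9, 3.2]

r_height = pearson(max_height, ctr)
r_width = pearson(max_width, ctr)
print(f"height vs CTR: r = {r_height:.2f}")
print(f"width vs CTR:  r = {r_width:.2f}")
```

With these sample values both coefficients come out negative, with height much closer to -1 than width, which mirrors the pattern described above: a clear relationship for height and a weaker one for width.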

Our image data should not be taken to mean that you should never use images in your email messages, only that you should experiment with different mixes of image and text content and not just assume that your recipients only want image-heavy emails.
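If you do run that kind of experiment, you will want some way to judge whether a difference in CTR between two versions is more than noise. The sketch below uses a two-proportion z-test, one standard option that I am assuming here rather than something the report prescribes. The send and click counts are hypothetical.

```python
import math

def two_proportion_z_test(clicks_a, sent_a, clicks_b, sent_b):
    """Return (z, two-sided p-value) for the difference in CTR between A and B."""
    p_a, p_b = clicks_a / sent_a, clicks_b / sent_b
    # Pooled CTR under the assumption that both versions perform the same.
    pooled = (clicks_a + clicks_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    z = (p_a - p_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical split test: version A is text-heavy, version B is image-heavy.
z, p_value = two_proportion_z_test(clicks_a=300, sent_a=5000, clicks_b=250, sent_b=5000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A small p-value suggests the gap between the two versions is unlikely to be chance alone. The test assumes recipients were split randomly and that each send is large enough for the normal approximation to hold.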

Read Full Report Here by Mike Volpe, CMO, HubSpot